How Soda Studio optimized a conversion funnel by 35% with Maze

Discover how Soda Studio used Maze to validate design variations and streamline product iteration, leading to increased user engagement.

About Soda

Soda Studio is an Amsterdam-based UX & Product agency focusing on user-centered design and high conversion.

Industry

Tech & Software

Opportunity

Collect valuable user insights to validate design hypotheses and improve the conversion funnel, right at the design stage.

Key Maze features used

Heatmaps

Prototype Testing


35%

flow exit rate reduction

For digital studios Soda, Resoluut, and Milkshake, design is fundamentally a user-centric activity. At their core is a mission to build products that people love to use, upheld in practice by two of the most important parts of the design process: testing and research.

The trio is based in Amsterdam, the Netherlands, and works closely together on projects with clients such as MasterCard and CheapTickets.nl. A notable project is Tap To Go, developed for Albert Heijn, the largest Dutch supermarket chain. The card allows buyers to shop cashless by tapping it on the product reader, a concept similar to Amazon Go.

During a recent project, the Soda Studio team used the Maze A/B testing feature to test the user flow of a design and, based on the insights collected, reduced the exit rate from the flow by 35%.

Research, an essential part of the process

Each studio focuses on different yet equally important aspects of a good product. Soda Studio does UX and interaction design, while Resoluut is in charge of visual and brand design. The newest addition to the group is Milkshake, established solely for research purposes.

“For us, research was becoming such an important part of the design process that it was worth starting a new company just for that,” says Bo Merkus, UX researcher at Soda and Milkshake.

"We don’t want to deliver long research reports that no one is going to read. We want to offer clear, easy to share insights that people can use to improve a product. And Maze is really nice for that."

Bo Merkus

UX researcher at Soda Studio


The Soda Studio team

During projects, the studios mix and work together: research joins branding and design at the earliest stage, starting with the design proposal. This means designers work closely with UX researchers to quickly iterate based on new findings.

“In the traditional process of Analysis → Deployment → Live, we always try to incorporate testing. As a UX Researcher, I’m involved in projects to support the designer and see if what they design is understood by users,” says Bo.

Testing for difference

On a recent project, the Soda Studio team used the Maze A/B testing feature to test two variations of a design with users. The prevailing hypothesis was that the existence of a tab bar negatively impacted the completion rate and diverted users away from the flow.

To give users an introduction to the app and the testing experience, Bo set up the first task as a walkthrough for users to discover the feature.

After testers completed this first task, they were redirected to the second one, which came in two versions. Half of the users were shown the version with a tab bar, while the remaining half were given the version without it.

Deriving key insights based on results

The results of the first task showed that most users were able to find and recognize the functionality as the method to fix their problem. Bo was also able to view the exact path taken by users to complete the first task.

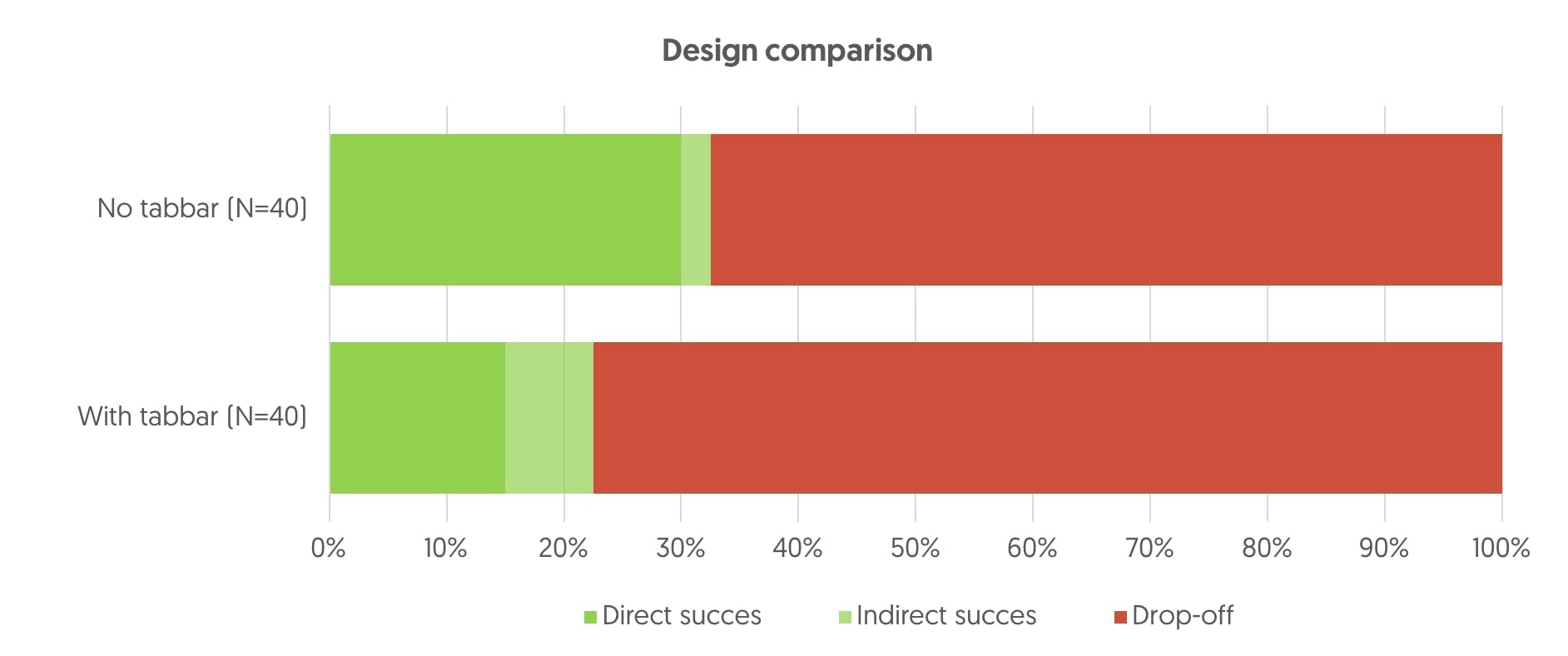

When they moved on to analyzing results from the A/B test, they uncovered a number of significant insights. The first conclusion Bo drew from the data was that the design without the tab bar had a higher success rate (32%) than the design with the tab bar (22%).

Among these successes, the design without the tab bar also had a lower indirect success rate than the design with it: 30% of test participants completed the task via the direct route in the design without the tab bar, compared with only 15% in the design with it.
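The indirect success rates are not stated outright, but they can be worked out from the figures above. The short sketch below does that arithmetic, assuming the overall success rate is simply the sum of direct and indirect success. The function and variable names are illustrative and not part of Maze.

```python
def indirect_success_rate(total_success: int, direct_success: int) -> int:
    """Return indirect success in percentage points.

    Assumes overall success = direct success + indirect success.
    """
    if direct_success > total_success:
        raise ValueError("direct success cannot exceed overall success")
    return total_success - direct_success


# Percentages reported in the case study for the second task.
variants = {
    "without tab bar": {"success": 32, "direct": 30},
    "with tab bar": {"success": 22, "direct": 15},
}

# Derive the indirect success rate for each variant.
indirect = {
    name: indirect_success_rate(v["success"], v["direct"])
    for name, v in variants.items()
}

for name, rate in indirect.items():
    print(f"{name}: {rate}% indirect success")
```

Under that assumption, only 2% of participants reached success by an indirect route without the tab bar, against 7% with it.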

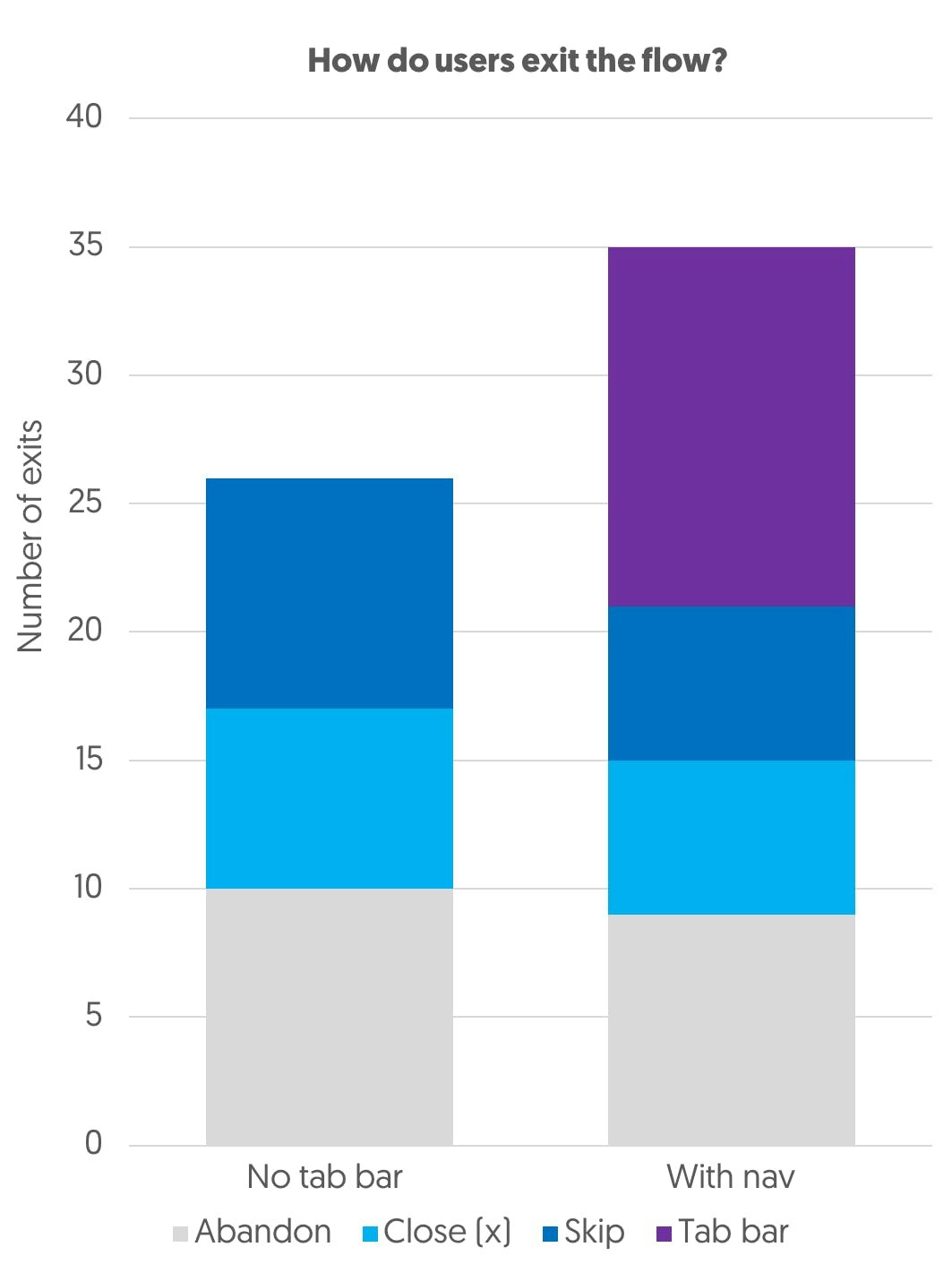

Additionally, a closer look at the click heatmaps for every screen revealed where exactly in the flow users dropped off.

“With the data from Maze, I was able to create graphs and show our client when users dropped off. There were more exits because people tapped the bar, so being able to locate where they tapped and how they left the flow was extremely useful,” confirms Bo.

The key insight was that the highest proportion of exits (40%) in the design with the tab bar stemmed from users tapping it. Importantly, the design with the bar resulted in 35% more exits from the flow than the design without it.

By testing their initial assumption, the team collected valuable data to validate their hypothesis and implement an optimized conversion funnel right at the design stage.

"Maze enables me to get insights into how people use a prototype. I can conduct A/B testing without having something developed or live. Plus it's very intuitive and easy to use."

Bo Merkus

UX researcher at Soda Studio
