Anthropic just ran 81,000 AI-moderated interviews across 159 countries in a single week. No human moderator in the room. Adaptive follow-up questions, 70 languages, and open-ended conversations at a scale no typical research team could realistically match.

It’s an impressive milestone, but it also raises a new question: as artificial intelligence (AI) takes on more research, how do you decide what to trust it with, and where human judgment adds the most value?

Research demand has climbed 66% in the past year alone. You’re being asked to do more, with higher stakes and less time. You can’t be in every session or review every interview, not when you’re jumping between studies or scrubbing through hours of footage to find the insight that drives progress.

The way forward isn’t doing everything yourself; it’s knowing where you’re most needed and having the confidence to step in when it counts. For many product teams and researchers, that’s where AI comes in. So, what parts of user research can you confidently hand off to AI—and still trust what comes back?

AI is the baseline, so where you step in matters

For most teams, AI has crossed from experimental to essential. Nearly 69% of researchers automate at least some of their workflows—from aligning on study goals and drafting questions to analyzing data—a 19% increase from last year. What used to feel like an advantage has quickly become expected.

As artificial intelligence takes on more of the execution, the role of the researcher becomes more defined. Not every part of user research needs you in the same way. Some parts of research scale naturally; others don’t.

Be intentional about where you show up:

You’re strongest where things get messy. Unlike AI tools, humans scale meaning—you can interpret nuance, decide what matters, and shape direction. You can read the room, understand body language, and ultimately define intent.

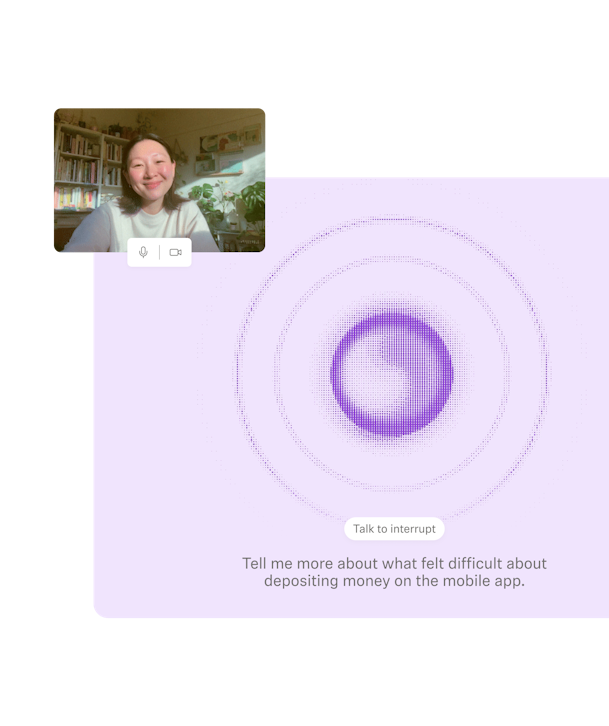

AI moderation is strong where consistency matters. AI can scale your research by running studies autonomously, asking the same questions every time, and probing without fatigue. Every participant gets the same depth and structure that you set, grounded in your research goals.

The most forward-thinking organizations are using automation to dynamically handle repetitive research and data analysis, while guiding human researchers towards more ambiguous or emotionally complex aspects of user research.

Not every study needs you in the room

The real skill is knowing where your involvement changes the outcome. Once you find the right balance between human and AI moderation, you’ll quickly see where you make the biggest impact.

Some studies are exploratory and emotionally complex, where a skilled human moderator demonstrates their value by reading the room, adjusting in real time, and knowing when to go off-script. Other studies have a clear stimulus, a defined goal, and questions that benefit from consistency and depth—exactly where AI can take the lead.

Before automating a study, ask yourself three things:

- Is the study structured and well-defined? If your questions are clear, your stimulus is ready, and you know what you're trying to learn, you’ve already done the hard part. Automated moderation works best with strong direction from you.

- Does consistency matter more than spontaneity? Concept validation, message testing, and benchmarking depend on every participant having the same experience. AI doesn't have an off day, it doesn’t get tired, and it won’t accidentally probe one participant deeper than another.

- Are you trading off depth for time? If you don't have the bandwidth to moderate every session, AI moderation lets you run more research without stretching yourself thin. You stay in control of the design and analysis—the execution doesn’t have to sit with you.

If you're answering yes to most of these, you're looking at a strong candidate to pass to AI.
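If it helps to make the checklist concrete, here is a minimal sketch of the three questions as a simple screening rule. The names (`StudyProfile`, `is_ai_moderation_candidate`) are hypothetical, and reading "most of these" as at least two of three yes answers is an assumption, not a rule from any tool or framework.

```python
from dataclasses import dataclass


@dataclass
class StudyProfile:
    """Answers to the three screening questions (hypothetical structure)."""
    structured_and_well_defined: bool   # clear questions, stimulus ready, known goal
    consistency_over_spontaneity: bool  # every participant needs the same experience
    trading_depth_for_time: bool        # no bandwidth to moderate every session


def yes_count(study: StudyProfile) -> int:
    """Count how many of the three questions were answered yes."""
    return sum([
        study.structured_and_well_defined,
        study.consistency_over_spontaneity,
        study.trading_depth_for_time,
    ])


def is_ai_moderation_candidate(study: StudyProfile, threshold: int = 2) -> bool:
    """Return True when 'most' answers are yes.

    The default threshold of 2 out of 3 is an assumption; adjust it to
    match how cautious your team wants to be.
    """
    return yes_count(study) >= threshold


# A structured concept test with limited moderator time
concept_test = StudyProfile(True, True, True)
# An exploratory, emotionally complex discovery study
discovery_study = StudyProfile(False, False, True)

print(is_ai_moderation_candidate(concept_test))
print(is_ai_moderation_candidate(discovery_study))
```

Treat the result as a prompt for discussion, not a verdict. A study that passes the check can still call for a human moderator if the topic is sensitive.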

💡 If you’re thinking about integrating AI further into your workflows, consider strengthening your trust and accountability by using research-grade AI.

What this looks like in practice

As AI moderators expand into more types of research workflows, you start to see their value show up in practical ways. When more research can happen in one place, more people can ask better questions and get answers they can act on.

Example 1: A product team validating a new concept before committing resources

The study is structured, the stimulus is clear, and the goal is to understand how people interpret what they’re seeing. AI moderation handles the conversations—asking consistent questions, probing for clarity—while the researcher focuses on what the patterns mean and whether the direction holds.

Example 2: A marketing team testing messaging before launch

Instead of relying on internal opinions or waiting for performance data, they’re hearing how users make sense of different value propositions—what feels clear, what feels vague, and what actually builds trust. The depth is there, but without the overhead of moderating every session.

Example 3: A team investigating friction in an onboarding flow

They already know where users drop off. What they need is context. With structured prompts and screen-sharing, participants walk through the experience while being guided in the moments that matter—surfacing confusion, hesitation, and mismatched expectations.

Every participant gets the same level of attention, the same depth of questioning, and the same space to explain their thinking. That consistency is what makes the insights easier to trust, because they’ve been explored with the same level of care.

And that’s where the balance starts to click.

As the researcher, you’re still defining the goals and direction. You’re still deciding what’s worth asking, what matters in the data, and what happens next. The thinking doesn’t move; human review and oversight are still there. It’s the execution that doesn’t always have to sit on your plate anymore.

The most impactful systems won’t replace human judgment—they’ll extend it, helping teams solve more complex problems faster, across increasingly complex environments.

Netali Jakubovitz

VP Product @ Maze

The new standard for credible research

AI-moderated research is already part of how modern teams work. Strong research comes down to choosing the right method for the question.

Automation is great for speed, scale, and consistency. It helps you run repeatable studies and surface patterns quickly across large groups. But some work still needs a human touch—especially when nuance, sensitivity, or deeper context is involved. You don’t need to be in every study to stay in control. You need to know where your involvement actually changes the outcome.

Researchers who get this right are the ones who can make that call with confidence. They choose the right level of oversight and stand behind their findings. That’s the standard now.