For researchers, Maze is where the evidence is captured—showing how users move through an experience: where they hesitate, what they choose, and where they drop off. That evidence has always mattered because it’s observable, measurable, and easy to act on.

But knowing what happened is only part of the picture. The bigger question is why.

Why did someone pause there? Why did one concept resonate and another fall flat? Why did they complete the task, but still sound unsure? Those are the questions that shape product decisions, creative direction, and strategy—and when you can’t answer them, decisions still get made, but without knowing if they’re the right ones.

Getting to the why isn’t always easy. It usually means a separate study, extra budget, and time from a researcher. When those don’t line up, the why gets lost—and data on its own rarely tells a story strong enough to act on.

Maze’s AI moderator was built to close that gap.

From open conversations to evaluative depth

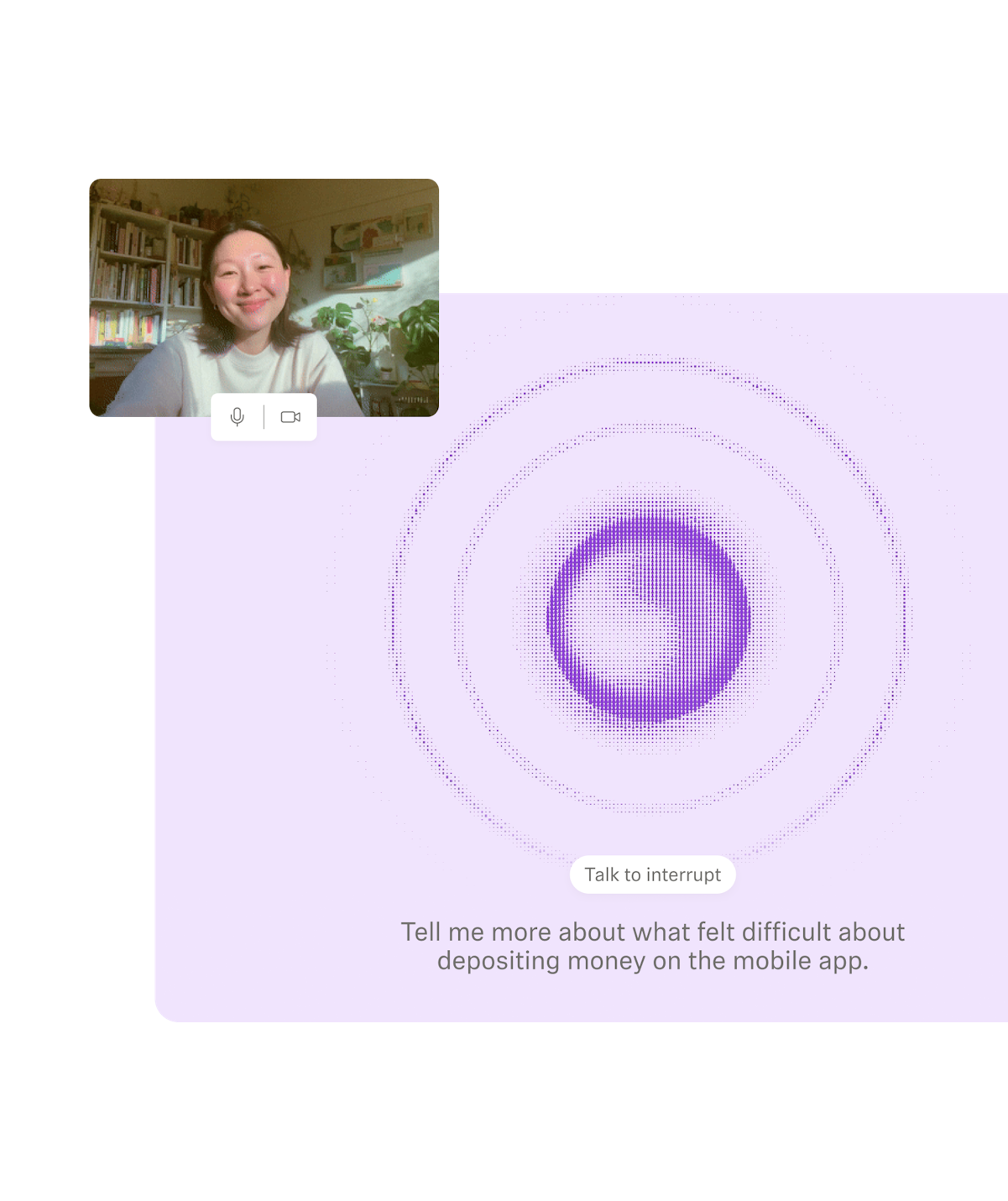

AI moderator launched last year as a new way to run interviews, designed for moments when teams needed the depth of a conversation without the delays that usually come with one.

Built on research-grade AI, the moderator works the way researchers do: grounded in clear goals, asking unbiased questions, and adapting in real time to what participants share. Instead of following the rigid scripts often seen in AI moderation tools, it’s intentionally designed to be conversational while staying anchored to your objectives: asking relevant follow-up questions, probing deeper when needed, knowing when to pivot, and uncovering the reasoning behind what people say. It feels less like a tool and more like a capable researcher running the conversation with you.

That showed up quickly in how teams started using it. One team ran 200 interviews in just 10 days—something that would have taken weeks with traditional moderation—while another reached a niche audience, capturing candid feedback that likely wouldn’t have surfaced with a human moderator. The result is interview-level depth at a scale that wasn’t previously practical.

That was the foundation.

Today, teams are using AI moderator across a wider range of studies. We’ve now added visual stimulus testing, flexible discussion styles, and—very soon—screen-sharing, expanding AI moderator beyond conversational research into the evaluative methods teams rely on every day. Instead of switching tools or running separate studies, you can move from exploration to evaluation in one place, keeping the context of your research intact.

The research you’re already doing goes deeper, and the questions that once required a separate study can now be answered in the same one.

Get clear on what lands and understand why

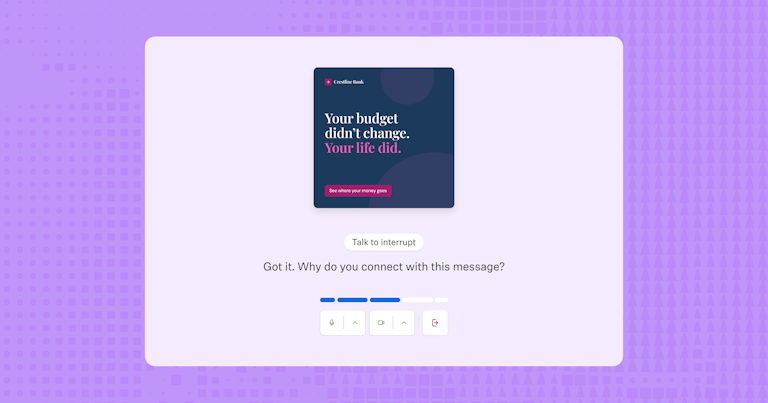

With visual stimulus testing, and soon screen-sharing, you can put concepts, designs, copy, and experiences in front of participants and hear how they respond in real time.

Getting reactions is easy, but understanding what’s behind them isn’t. Until now, getting to that why often meant scheduling a moderated session or spending hours reviewing recordings and piecing the story together yourself.

AI moderator follows up on what participants see and say, using the stimulus to probe where it matters. With screen-sharing, it adapts to how people naturally explore—stepping back while they navigate, then digging into the reasoning behind what they did. The focus stays the same: helping you reach your research goals with insights you can act on, not just surface-level reactions.

You can now:

- Test brand and creative with the depth of a real conversation

- Compare copy variations and hear what shaped each response

- Validate design directions with both user reactions and the reasoning behind them

- And soon, test live experiences in real time, from competitor flows and prototype walkthroughs to checkout journeys and more

Go from idea to feedback in a single day with a clear understanding of what to do next.

Run AI-moderated research your way with full control

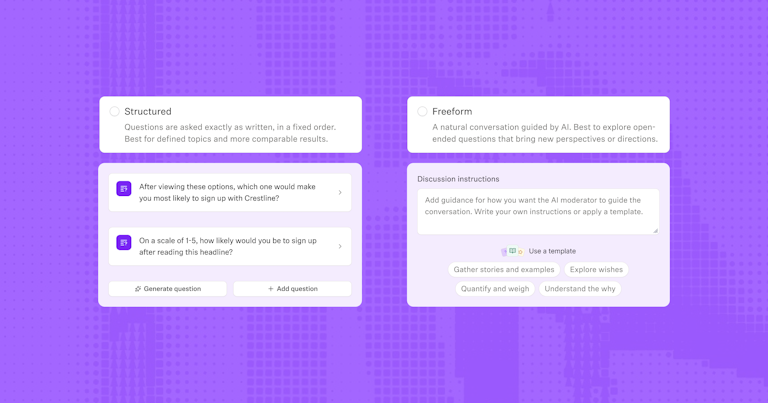

Most AI research tools force a tradeoff: a rigid structure that gives you consistency, or open-ended exploration that uncovers depth—but rarely both. Research doesn’t follow a single format, and now your studies don’t have to either.

With more flexible discussion styles, you can shape how AI moderator runs each conversation based on your research objective, whether you’re exploring broadly or validating something specific.

That means you can:

- Explore with freeform goals: Let the moderator adapt naturally to uncover behaviors, motivations, and unfiltered first impressions

- Validate with structured goals: Ask up to five fixed questions with controlled follow-ups, so every participant is asked the same questions and patterns emerge across conversations

You can also combine both in a single study: starting with open exploration, then shifting into structured validation. No need to adjust your methodology to fit the tool. You can even attach custom discussion instructions at the goal or question level to shape exactly how the AI probes and follows up.

The result is a moderator that knows when to explore and when to hold the line—so you get both depth and consistency in the same study.

Research that works across your team

As AI moderator expands into more types of research, it becomes useful in more moments across the team—for the everyday questions that come up as work moves forward.

- Researchers can take on more work without compromising rigor, with guardrails built into every conversation

- Designers can test early concepts and directions before committing, with both real reactions and the reasoning behind them

- Product managers can validate ideas and live experiences with evidence that moves roadmaps forward, grounded in user insight, not assumptions

- Marketers can test messaging, copy, and creative at scale, and hear the exact language customers use to describe what resonates

The outcome is consistent across every role: faster decisions, grounded in real insight. When teams can understand both what users do and what’s behind it, decisions move forward with greater clarity.

📖 When AI takes on more of the execution, the researcher’s role becomes more defined. “The next research skill: Knowing when to use AI with confidence” explores exactly where your involvement still changes the outcome.

Research-grade AI you can trust

AI adoption is now mainstream: 69% of researchers use AI in at least some of their work, up 19% from last year. But it hasn’t replaced the full research workflow.

82% of researchers say interpreting nuance and emotion still requires human judgment. That hesitation is grounded in how research actually works: it depends on asking thoughtful questions, capturing meaning accurately, and understanding what holds up under scrutiny. Every part of AI moderator is designed with that in mind.

“Human review is most valuable in the messy middle, where you’re connecting what customers said to what the organization should do about it.”

Amanda Gelb

Strategic Researcher @ Aha Studio Inc.

Grounded in a researcher’s mindset

AI moderator starts with your goals and follows the intent of your study, adapting to what participants share and uncovering the reasoning behind their responses. It approaches each conversation with the same instincts an experienced researcher brings to an interview—knowing when to dig deeper, when to move on, and how to keep the discussion on track.

Built with quality guardrails from the start

Research integrity is built into how the system works. Powered by advanced AI models and thoughtfully designed, AI moderator avoids leading questions and never validates or reframes participant responses. These explicit guardrails help avoid common research pitfalls before they happen.

Tested for consistent, real-world performance

Research-grade means performing reliably in the conditions research actually happens in—messy, nuanced, and often unpredictable. AI moderator is evaluated across 25 quality metrics and continuously refined based on real usage and feedback, so performance holds up beyond controlled scenarios.

The platform you trust, now with more depth

This launch reflects a broader shift in how teams approach research.

Our 2026 Future of User Research Report found that the number of teams that see research as essential to business strategy has nearly tripled in a single year. That kind of shift doesn’t come from running more studies. It comes from having the reasoning behind the data, the kind people can use to make decisions with confidence.

Maze has always shown you what happened. Now it helps you understand why.

When that why is easier to access, research becomes part of how decisions get made—rather than a step along the way.

What to know about AI moderation

What is AI moderation?

AI moderation uses artificial intelligence, including LLM-based models, to guide and scale research conversations—handling real-time follow-up, consistency, and execution across participants. Maze’s AI moderator takes a different approach. It’s built on research-grade AI—designed to think like a researcher, ask unbiased questions, and stay grounded in your study goals. Instead of optimizing for output alone, it prioritizes research rigor, context, and reliability, so you can scale research workflows without losing the depth and quality that make insights trustworthy.

How is Maze’s AI moderator different from other AI moderation tools?

Maze’s AI moderator is purpose-built for research. Unlike generic AI moderation tools, it follows your research goals, adapts in real time, and avoids leading questions. It combines the strengths of human moderators—like contextual understanding and nuance—with the scale and speed of automation, so you can run high-quality studies without sacrificing depth.

What types of research can Maze’s AI moderator support?

AI moderator supports both exploratory and evaluative research through flexible AI moderation workflows, including:

- AI-moderated interviews

- User experience research

- Concept and design testing

- Copy and messaging validation

- Voice of customer feedback

- Live website and prototype testing (coming soon)

It allows teams to run multiple research workflows in one place, without switching between tools or compromising on quality.

How much control do researchers have with AI moderator?

Researchers stay in full control. You define what you want to learn, set your interview goals, and shape the discussion. AI moderator handles the execution—asking real-time follow-up questions, probing deeper, and keeping conversations on track based on your original intent. This is where AI moderation works best: automation supports the process, while researchers lead the thinking.

Can AI moderator replace human moderators?

No—AI moderator is designed to support researchers, not replace them. While AI moderation tools can scale execution, human moderators remain essential for interpreting nuance, making judgment calls, and guiding research strategy. As AI takes on more operational work, the role of the researcher becomes even more important.