Great user research starts with a clear question and a way to answer it.

For most teams, that second part is where things slow down. The question is already there: a flow to validate, a drop-off to understand, or a decision that would feel more grounded with real user input. Turning that question into a study is the uncertain part.

Demand for research has increased by 20%, yet for non-researchers, starting can feel unclear. A blank study doesn’t offer much guidance, and small decisions—how to structure a task, run usability testing, or phrase a follow-up question—can carry more weight when you’re not sure what “good” looks like. For researchers, that same moment shows up as volume and risk. Reviewing setups, rewriting questions, and making sure studies meet a high bar is essential work, but it’s repetitive and difficult to scale.

In both cases, time gets spent before the study has even begun. And when setup takes too long, research happens less often than it should, at a time when anyone can build and understanding your users is what makes the difference.

Maze’s AI study builder was designed for this moment, so anyone can move from a question to a research-ready study with research standards already built in.

From question to structured research

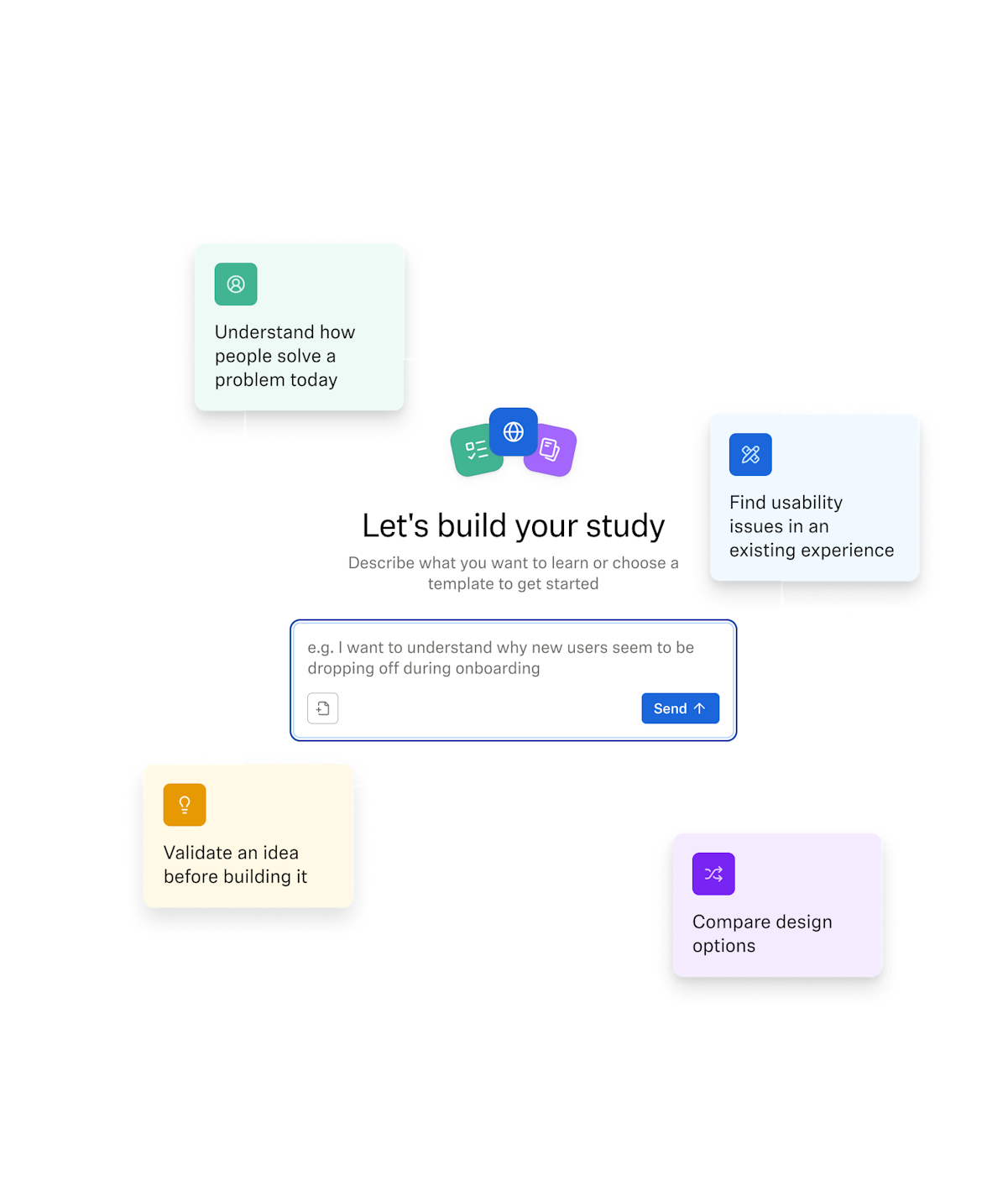

Instead of beginning with a blank page, you begin with what you want to learn: a question, a goal, or an existing plan. From there, Maze builds a structured unmoderated study, including methodology, question flow, and settings, based on that input.

You can start with a prompt, choose from prompt templates for common use cases like usability testing or prototype testing, or upload a study plan. The workflow stays collaborative: if more context is needed, the AI study builder asks for it, whether that’s your audience and its demographics, your goals, or the decision you’re trying to support. Those details shape how the study is built before anything is finalized.

Once the study is generated, you aren’t locked in. You can refine questions, iterate on the approach, or rethink the scope without starting over. The structure guides you while staying flexible.

- Start: Turn a question, template, or plan into a structured study

- Refine: Adjust and improve, with the builder’s guidance

- Review: Check your methodology, questions, and settings

- Launch: Go live when it’s right and start collecting insights

What changes is how you start. You’re no longer building a study from scratch; you’re shaping one that’s already rooted in researcher-defined best practices.

Research that holds up across every team

Good user research relies on structure, sequencing, and neutrality—the right methodology for the goal, and a clear flow that avoids bias and leads to results you can trust.

As more teams run studies, maintaining research quality becomes harder. Researchers often step in to review setups, refine questions, and ensure the output is reliable. That work is essential, but it’s also repetitive and difficult to extend across the organization when every study depends on hands-on support.

What changes with Maze’s AI study builder is how that standard shows up. Instead of relying on expertise every time, the structure behind good research is applied from the start. Every study follows a consistent flow, best practices are built in, and the output doesn’t depend on how familiar someone is with the methodology—it reflects it by default.

That consistency makes it possible to run more studies without lowering the bar:

- Researchers’ methodology becomes the default, at scale

- Product managers and designers can run research on their own timeline

- Marketers can easily validate messaging or concepts before committing to a budget

- Leadership can scale research tools, knowing decisions are grounded in evidence

When more people can run studies that hold up, research becomes part of how teams make informed decisions day-to-day, giving them the confidence to shape direction rather than react to it.

AI study builder removes the friction from getting research off the ground. It takes you from an idea to a launch-ready study in minutes, while keeping your research question at the center and guiding you toward the right methodology. It’s not just about enabling more people to run studies—it’s about making high-quality research the default, so teams can move faster without compromising on standards.

Noel Gee

Head of Research Partner Program at Maze

Where AI supports, and teams bring judgment

AI may be mainstream, but it hasn’t replaced the full research workflow. 82% of researchers say interpreting nuance and emotion still requires human judgment—reflecting what research actually demands: understanding context, reading between the lines, and deciding what matters.

Maze’s research-grade AI is designed with that in mind. It protects what makes user research valuable, while supporting the parts that tend to slow teams down. With the AI study builder, every study is evaluated against a set of quality metrics covering clarity, bias, structure, and flow. Instead of spending time correcting study setups or catching avoidable issues, researchers can trust that foundational quality is in place from the start.

That foundation creates space for the work that matters most: making sense of what participants share, connecting insights to product direction, and deciding what to do next. That’s where judgment has the greatest impact, and it stays firmly human.

The AI study builder helps teams reach that point faster, so more time goes into understanding users, not setting up studies.

The best thing a researcher can do isn’t to run every study, it’s to make sure great research happens without them. When every role becomes a contributor to insight, organizations stop relying on personal assumptions and start learning from the people who matter most: their ideal customers. The AI study builder turns that into the default: research-grade quality, for every team, every time.

Matthieu Dixte

Senior Product Researcher at Maze

Better decisions start with research

Our 2026 Future of User Research Report found that the number of teams that see research as essential to business strategy and decision-making has nearly tripled in a single year.

The intent is clear: more teams want to run research, more often, and use it to guide product development. But what’s been missing is a way to do that consistently.

When research tools don’t keep pace with how teams work, user feedback arrives too late or not at all. When they do, research becomes part of the flow: easier to start, easier to run, and easier to trust.

Maze’s AI study builder brings everything together—so research keeps pace with your work, and decisions aren’t made without it.

What to know about AI moderation

What types of research can Maze’s AI study builder support?

Maze’s AI study builder supports a range of unmoderated research use cases, including usability and prototype testing, card sorting, tree testing, concept validation, and messaging feedback. You can start with a question, a template, or an existing plan, and shape the study to fit your goal. Whether you’re testing a flow, exploring a new idea, or gathering user feedback, the structure adapts to how you want to run your research.

How much control do I have over the study it generates?

You stay in control throughout. The AI study builder gives you a strong starting point, not a finished answer. You can refine questions, adjust the methodology, and change the structure at any time. Every update happens in real time, with context, so you can shape the study as you go without having to start over.

What is research-grade AI?

Research-grade AI is built to support high-quality research, not just fluent conversations. It’s designed to avoid leading questions, minimize bias, and stay grounded in what participants actually say. In Maze, every conversation is tied back to your research goals and evaluated against 25 quality metrics to help keep insights consistent and reliable.

How does Maze ensure quality and privacy when using AI?

Maze partners with trusted AI providers to power our features. Our AI providers do not use Maze customers’ data sent through the API (such as your results, Clips recordings, or other types of data) to train their models or improve their services. Read more about Maze’s AI models and your privacy here. You can find additional information about our security measures here.

Can AI replace human researchers?

No—AI-powered tools and moderators are designed to support teams, not replace them. While tools can scale execution, humans remain essential for interpreting nuance, making judgment calls, and guiding research strategy. As AI takes on more operational work, the role of the researcher becomes even more important.